What is an AI camera? How AI is changing photography and photo editing…

AI is the new buzzword in photography, but how do machine learning, neural networks and computational photography help us get better photos?

Artificial intelligence (AI) is everywhere, and if you haven't yet got an AI camera or AI-powered smartphone, you probably soon will. Even your phone's software claims to use AI to make decisions on your behalf. Adobe's Photoshop Camera uses AI to identify objects and scenes in your pictures and suggest 'lenses' (digital effects) for comic and creative impact. It’s in your ‘auto’ mode, enables that ‘bokeh’ blur you love so much, and even powers your smartphone’s newly impressive ‘night mode’. It’s so impactful that you could be forgiven for thinking that AI is taking over photography.


Is it all just marketing hubris, or is AI in a smartphone – and particularly, in its camera – something we should all aspire to have? And what even is an AI camera? With the term AI increasingly being used not only in camera phones, but in all kinds of cameras, it pays to know what AI is actually doing for your photos.

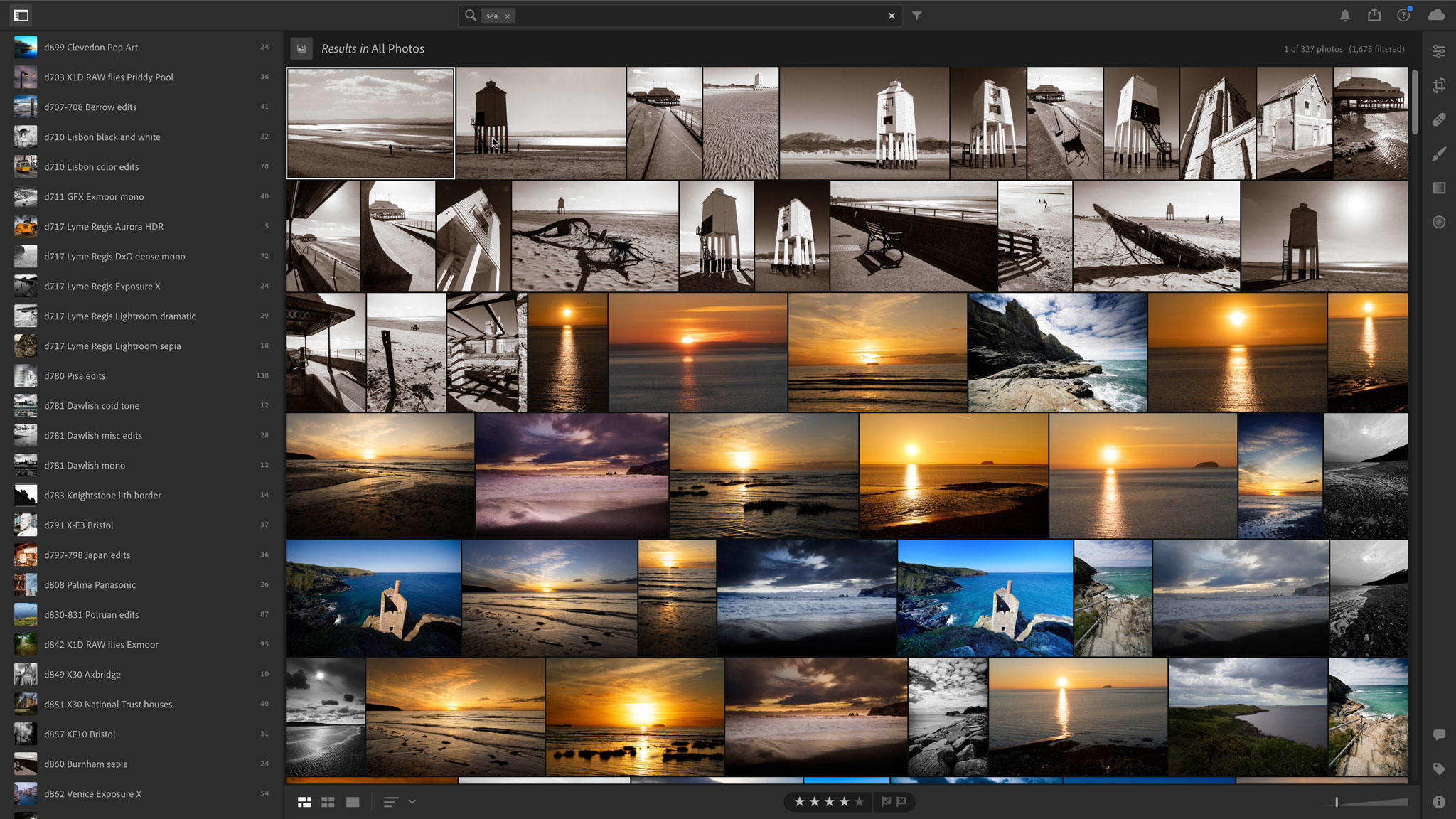

AI has blurred the boundaries between image capture, image enhancement and image manipulation. It is used in photo editing to meld, enhance and 'augment' reality, to make more intelligent object selections, to match processing parameters to the subject and to help you find images automatically based on what's in them rather than on manual keywords and descriptions. It is already looking at what you photograph and making its own decisions about how to handle it.

Welcome to the brave new world… of AI cameras.

What is AI?

AI is a branch of computer science that examines whether we can teach a computer to think or, at least, learn. It's generally split into subsets of technology that try to emulate what humans do, such as speech recognition, voice-to-text dictation, image recognition, pattern recognition and face scanning.

There is a whole cluster of buzzwords around this topic. 'AI', 'deep learning', 'machine learning', 'computer vision' and 'neural networks' are all intertwined in this new branch of technology.


But what’s AI got to do with cameras? Computational photography and time-saving photo editing, that’s what, and even voice activation. AI is quickly becoming an overused term in the world of photography. Right now it largely applies to smartphone cameras, but the incredible algorithms and sheer level of automated software that the technology is allowing will soon prove irresistible to most of us. It may not be time to chuck out the DSLR quite yet, but AI seems set to change how we take photos. Not only that, but it could soon take charge of editing and curating our existing photography libraries too.

Who is AI photography for?

Everyone. In fact, it’s mostly about making photography easier and more accessible. “In the past photography was the domain of those with the expertise of using a DSLR to create different types of images, and what AI has started to do is to make the effects and capabilities of more advanced photography available to more people,” says Simon Fitzpatrick, Senior Director, Product Management at FotoNation, which provides much of the computational technology to camera brands.

Nowhere is that truer than with AI photo editing, which allows all levels of photographer to effortlessly replace the background, perform color correction and overall improve photos in an instant. “It streamlines the editing experience and allows everyday users to become expert photo editors,” says William Wang, Product Manager at smartphone-maker Infinix.

What is an AI camera?

AI cameras think and learn about settings and image processing. “Smartphones equipped with powerful AI processors are able to quickly and automatically recognise what is in a scene, as well as automatically differentiate between foreground subjects and backgrounds,” says Wang. AI cameras can automatically blend HDR images in bright light, switch to a multi-image capture mode in low light and use the magic of computational imaging to create a stepless zoom effect with two or more camera modules.

‘Auto’ mode comes of age

It can be difficult to separate true AI from sophisticated automation. For years, compact camera makers have been offering different subject-oriented scene modes which can be chosen automatically by the camera. Is that 'intelligence', or simply a slightly more advanced implementation of exposure measurement, subject movement and focus distance? Multi-pattern metering systems typically use a complex measurement of light distribution based on thousands of real-world photos, and have been using a 'deep learning' process since before the term was invented.

Cameras have always used algorithms – that’s what ‘auto-mode’ is – but now comes ‘real’ AI. “It was pretty obvious that ‘classical’ AI algorithms such as auto exposure, auto white balance and auto focus would get a huge boost thanks to machine learning and deep neural networks,” says Jérôme Abribat, Communication and Content Marketing Director at DxO. That auto-mode is now getting smarter is hardly surprising. “What is more surprising is that deep neural networks work so well that they started to replace algorithms that, until very recently, were never associated with AI, such as demosaicking and de-noising.”

Voice-activated cameras

The ability for a computer to understand human speech is a simple form of AI, and it's been creeping onto cameras for the last few years. Voice control has featured on a number of GoPro models, starting as far back as the HERO5 and carrying through to the latest GoPro HERO9 Black. Even dash cams are able to take actions when you utter simple phrases such as 'start video', 'take photo' and so on.

It all makes sense, especially for action cameras, where hands-free operation makes them much easier to use, but is it really AI? Technically, it is, but until recently voice-activated gadgets were simply referred to as 'smart'. Some now allow you to say quite specific things such as ‘take slow-motion video’ or ‘take low-light photo’, but an AI camera needs to do a little more than that to be worthy of the name.

AI software in smartphones

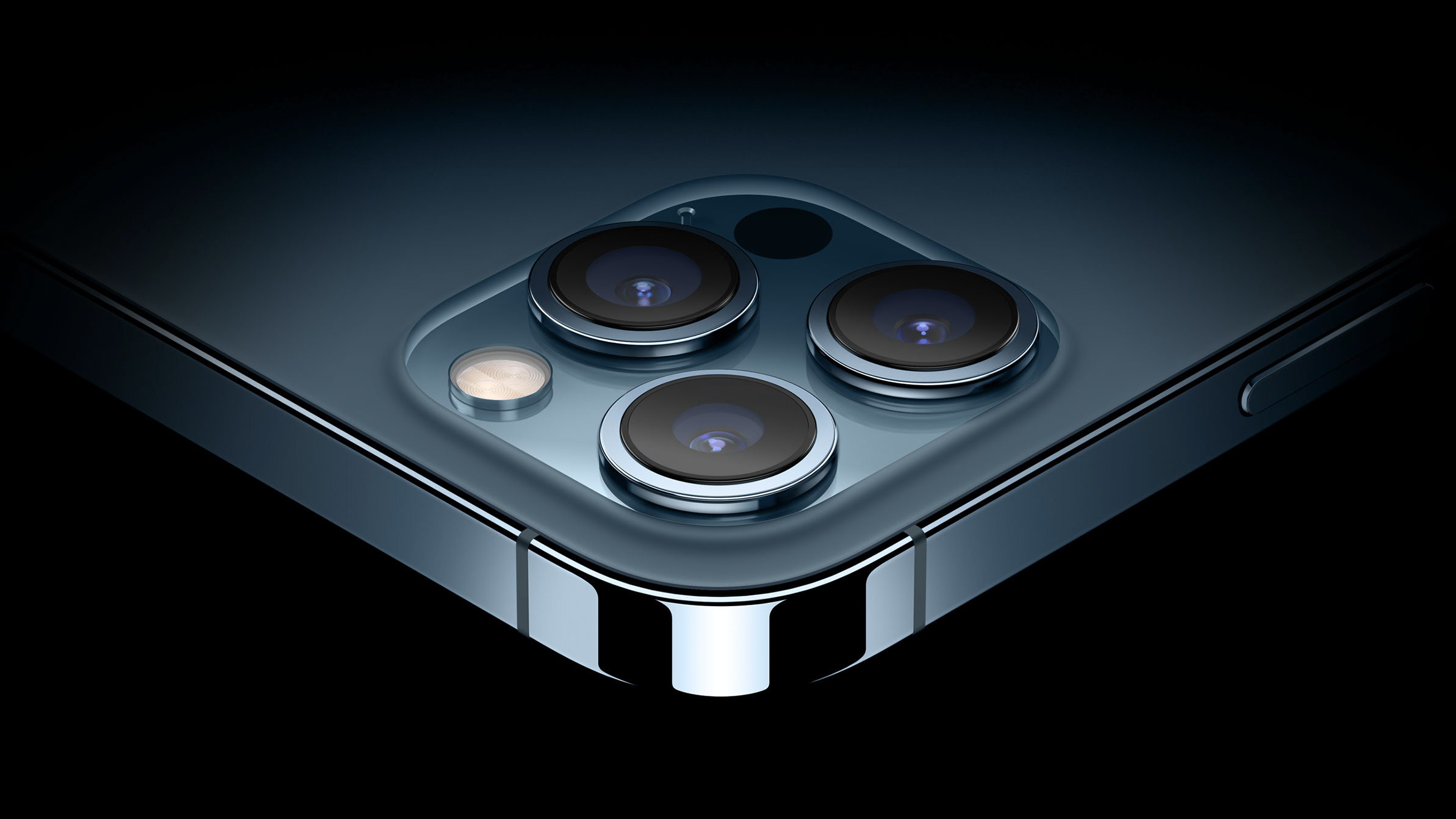

AI is mostly about new kinds of software, initially to make up for smartphones’ lack of zoom lenses. “Software is becoming more important for smartphones because they have a physical lack of optics, so we’ve seen the rise of computational photography that tries to replicate an optical zoom,” says imaging analyst Arun Gill, Senior Market Analyst at Futuresource Consulting. “Top-end smartphones are increasingly featuring dual-lens cameras, but the Google Pixel 5 uses a single camera lens with computational photography to replicate an optical zoom and add various effects.”

Multi-camera arrays and computational imaging have long since merged to produce a hybrid technology that replicates many of the depth of field and lens effects you get from larger cameras. A camera phone is no longer 'just' a camera. It's a calculating, analysing, 'thinking' device that doesn't just capture the scene as it is, but how it thinks you want it to be, or how it thinks you ought to want it to be...

AI can be like having a know-all assistant. After a while you might start to wonder who is actually in charge.

What is computational photography?

Computational photography goes beyond hardware – the lens and sensors of a camera – and uses machine vision software to enhance the photos that are captured. It’s essentially about improving the automatic settings for better point-and-shoot photos. “Smartphones offer a lot of processing power to support these image processing algorithms and improve images by reducing motion blur and adding simulated depth of field, as well as improving color, contrast and light range,” says Wang. “Adding AI has pushed the capabilities of computational photography to the next level by adding a broad set of smart photography features such as automatically retouching them and adjusting settings.”

As such, it makes the studio effects typically achieved when using Adobe Lightroom and Photoshop accessible to people at the click of a button. “So you're able to smooth the skin and get rid of blemishes, but not just by blurring it – you also get texture,” says Fitzpatrick. In the past, the technology behind ‘smooth skin’ and ‘beauty’ modes has essentially been about blurring the image to hide imperfections. “Now it’s about creating looks that are believable, and AI plays a key role in that,” he adds. “For example, we use AI to train algorithms about the features of people's faces.”

Simulating ‘bokeh’ blur

In recent years we've seen many multi-lens phone cameras use two or more lenses to produce aesthetically pleasing images that have a blurry background around the main subject. People (and, therefore, Instagram) love blurry backgrounds, but instead of using dual-lens cameras or picking up a DSLR and manually manipulating the depth of field, AI can now do it for you.

Commonly called the 'bokeh' effect (from the Japanese for blur), this works by using machine learning to identify the subject and then blurring the rest of the image. “We can now simulate bokeh using AI-based algorithms that segment people from foreground and background, so that we can create an effect that begins to look very much like a portrait taken in a studio,” says Fitzpatrick. The latest smartphones allow you to do this for photos taken with either the rear or the front (selfie) camera.
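To make the idea concrete, here is a minimal sketch of the second half of that process: once a subject mask exists, the background is blurred and the subject is left sharp. It assumes the mask has already been produced by some segmentation model (the hard, AI-driven part, which is not shown), and it uses a crude box blur rather than a realistic lens blur. The function names `box_blur` and `simulate_bokeh` are illustrative, not taken from any phone maker's software.

```python
def box_blur(image, radius=1):
    """Return a copy of a 2D grayscale image blurred with a simple box filter."""
    height, width = len(image), len(image[0])
    blurred = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            total, count = 0.0, 0
            # Average every pixel in the neighbourhood, clipped at the edges.
            for ny in range(max(0, y - radius), min(height, y + radius + 1)):
                for nx in range(max(0, x - radius), min(width, x + radius + 1)):
                    total += image[ny][nx]
                    count += 1
            blurred[y][x] = total / count
    return blurred


def simulate_bokeh(image, subject_mask, radius=1):
    """Keep pixels where subject_mask is True sharp; blur everything else."""
    blurred = box_blur(image, radius)
    return [
        [image[y][x] if subject_mask[y][x] else blurred[y][x]
         for x in range(len(image[0]))]
        for y in range(len(image))
    ]


# Tiny 4x4 example: a bright "subject" in the centre of a striped background.
image = [
    [0, 100, 0, 100],
    [0, 255, 255, 100],
    [0, 255, 255, 100],
    [0, 100, 0, 100],
]
# Assumed output of a segmentation model: True marks the subject.
subject_mask = [
    [False, False, False, False],
    [False, True, True, False],
    [False, True, True, False],
    [False, False, False, False],
]
result = simulate_bokeh(image, subject_mask)
```

In this toy example the four centre pixels keep their original value of 255, while the striped background is averaged into softer tones. Real portrait modes typically vary the blur strength with estimated distance, which this sketch does not attempt.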

“People refer to it as bokeh, but you don’t get the true blur you get with a DSLR where you can change the depth; with a phone, you can only blur the background,” says Gill. “But a small and growing number of photographers are really impressed with it and are using an iPhone X for everyday capture, and only when they’re on professional jobs will they get out their DSLR.”

What is LiDAR and time-of-flight (ToF)?

Apple’s iPhone 12 Pro and iPhone 12 Pro Max smartphones have a LiDAR scanner. Why? LiDAR stands for Light Detection and Ranging, and it uses lasers to calculate exact distances and depths. It’s typically found in robotic vacuum cleaners and will one day enable driverless cars. For iPhone photography it’s used primarily to improve autofocusing speed, particularly in low light.

ToF stands for ‘time of flight’. A ToF camera emits infrared light and times how long it takes to bounce back, which lets it create a depth map of the surrounding environment almost instantly. Only after objects in a composition have been depth-mapped can AI software create a ‘bokeh blur’ look by defocusing the background of an image. “A ToF camera on a smartphone allows the device to separate different objects,” explains Wang, whose company Infinix recently launched its Hot 10S series of smartphones with such AI-powered features. For example, when taking a photo of an individual in a public space, the ToF camera will be able to recognize that an individual is not part of the crowd. “This makes it easier to add a bokeh effect to the background of the image,” says Wang. “The main takeaway is that a ToF camera allows the user more control over how the subject of a picture is displayed.”
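The arithmetic behind time-of-flight ranging is simple enough to sketch: light travels out to the object and back, so the distance is the speed of light multiplied by the round-trip time, divided by two. The example below is an illustration under stated assumptions rather than how any particular sensor is implemented; the round-trip times, the 3-metre threshold and the function names are all made up for the example.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # metres per second, in a vacuum


def distance_from_round_trip(round_trip_seconds):
    """Distance to an object given the out-and-back travel time of a light pulse."""
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2


def split_by_depth(depth_map_m, threshold_m):
    """Mark pixels closer than threshold_m as foreground (True)."""
    return [[depth < threshold_m for depth in row] for row in depth_map_m]


# Hypothetical round-trip times in nanoseconds for a tiny 2x3 sensor.
round_trip_times_ns = [
    [10, 10, 40],
    [10, 10, 40],
]

# Convert each timing into a distance in metres to build the depth map.
depth_map_m = [
    [distance_from_round_trip(t * 1e-9) for t in row]
    for row in round_trip_times_ns
]

# Anything nearer than 3 m is treated as the subject; the rest is background.
foreground = split_by_depth(depth_map_m, threshold_m=3.0)
```

Here a 10-nanosecond round trip corresponds to roughly 1.5m and a 40-nanosecond round trip to roughly 6m, so the left-hand pixels are classed as foreground. A mask like this is what lets software decide which parts of the picture to defocus.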

What about AI on DSLRs and mirrorless cameras?

Automatic red-eye removal has been in DSLR cameras for years, as has face detection and, lately, even smile detection, whereby a selfie is automatically taken when the subject cracks a grin. All of that is AI. Will the likes of Nikon and Canon ever adopt more advanced AI for their flagship DSLRs? After all, it took many years for WiFi and Bluetooth to appear on DSLRs.

While we wait, a Kickstarter-funded ‘smart camera assistant’ accessory called Arsenal wants to fill the gap. “Arsenal is an accessory that allows the wireless control of an interchangeable-lens camera (e.g. a DSLR) from a mobile device, with machine learning algorithms used to take the perfect shot,” says Gill. “What it’s doing is comparing the current scene with thousands of past images, using image recognition to recognize a specific subject and applying the correct settings, such as a fast shutter speed if it recognizes wildlife.”

Canon, meanwhile, has leaned heavily on AI technology for the cutting-edge autofocus system in the EOS-1D X Mark III. Or, to be more precise, 'deep learning'. The complexity of the algorithms is the same, but the distinction is that a deep learning system is the end result of training (in this case, using professional photographs), whereas artificial intelligence in the strict sense is the ability for a machine to keep learning on its own. Meanwhile, its new Canon PowerShot Pick uses AI to detect, recognize, follow and photograph subjects in a scene completely autonomously.

What about Adobe and AI?

So do the advances in AI mean Adobe’s Photoshop and Lightroom will soon be defunct? Absolutely not; AI is a critical tool in making photo editing more automated. In fact, the latest update to Adobe Photoshop gives desktop photo editors an instant portrait effect similar to that of the 'portrait mode' found on camera phones. The update includes a new neural filter called depth blur that lets photographers choose different focal points in their images and blurs the background intelligently, in doing so creating a bokeh effect similar to using a fast portrait-length lens.

Meanwhile, one of FotoNation’s partners is Athentech, whose ‘Perfectly Clear’ AI-based technology carries out automatic batch corrections that mimic the human eye. A plugin for Lightroom, it’s specifically aimed at reducing how long photographers sit in front of computers manually editing. “Professional photographers make money when they’re out taking photos, not when they’re processing images,” says Fitzpatrick. “AI makes professional-looking creative effects more accessible to smartphone users, and it helps professional photographers maximise their ability to make a living.”

What is Adobe Sensei AI?

Adobe Sensei uses AI and machine learning (ML) to make essential edits quick and easy and intelligently automate editing for photos. “Users can add movement and dimensions, adjust the position of a person or object, make isolated edits and more, all with a simple click,” says Wang. “The application easily automates the more time-consuming parts of the editing process, allowing the user to spend more time on the creative side of a project.”

For example, Adobe’s Lightroom CC and Photoshop Elements use Adobe's server-based Sensei AI object recognition system to identify images by subject matter so that you no longer have to spend hours manually adding keywords. Sensei AI also facilitates features such as skin smoothing, selecting a subject automatically or colorizing an old black-and-white photo with a single click. Meanwhile, Sensei AI’s Auto Creations can scan images and automatically apply some quick edits.

What is Skylum Luminar AI?

Designed to take the tedium out of photo editing, Skylum Software’s Luminar AI is an AI-driven approach to image enhancement. “Luminar was the first image editor fully powered by AI and removes the need for expensive editing software, making it easier for everyday consumers to pick up the basics of photography,” says Wang. “The software provides a slimmed-down editing experience for beginner photographers/editors to hone in their editing skills by taking out the guesswork.”

Perhaps its most impressive AI-driven feature is AI Sky Replacement, which makes it very simple to completely replace the sky in an image, eliminating the need for manual masking. A related feature called AI Augmented Skies can autonomously add clouds, planets, lightning and more to your images, and even reflections to water. Luminar AI also contains portrait enhancement tools that autonomously identify human features, while its AI Structure feature adds definition only to those areas of a picture where it's appropriate.

What is DxO DeepPRIME?

DxO PhotoLab 4 lets photographers make selective adjustments to Raw format files so detail can be restored. The brains behind its ability to reduce artifacts such as color noise and color fringing is its machine learning DeepPRIME AI technology. “DxO DeepPRIME AI belongs to a class of neural networks called convolutional neural networks, the structure of which is directly inspired by neuroscientific research on the human brain,” says Abribat. “The computer is allowed to determine the values of millions of parameters within the network, hence the term automatic learning, based on a vast database of carefully chosen input and output example images.”
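The basic operation inside a convolutional neural network is easy to illustrate: a small grid of numbers (a kernel) slides across the image, and each output pixel is a weighted sum of the pixels beneath it. The sketch below is not DxO's method. It uses a single hand-picked averaging kernel to soften one noisy pixel, whereas a trained network such as the one Abribat describes stacks many layers of kernels whose values are learned from example images rather than chosen by hand. The function name and the sample data are illustrative.

```python
def convolve2d(image, kernel):
    """Slide a square kernel over a 2D image and return the weighted sums.

    Only positions where the kernel fits entirely inside the image are
    computed, so the output is smaller than the input.
    """
    k = len(kernel)
    out_height = len(image) - k + 1
    out_width = len(image[0]) - k + 1
    output = []
    for y in range(out_height):
        row = []
        for x in range(out_width):
            acc = 0.0
            for ky in range(k):
                for kx in range(k):
                    acc += image[y + ky][x + kx] * kernel[ky][kx]
            row.append(acc)
        output.append(row)
    return output


# Hand-picked 3x3 averaging kernel; a trained network would learn its weights.
smoothing_kernel = [[1 / 9] * 3 for _ in range(3)]

# A flat grey patch with a single "hot" noisy pixel at row 1, column 1.
noisy = [
    [10, 10, 10, 10, 10],
    [10, 100, 10, 10, 10],
    [10, 10, 10, 10, 10],
    [10, 10, 10, 10, 10],
    [10, 10, 10, 10, 10],
]

smoothed = convolve2d(noisy, smoothing_kernel)
```

The hot pixel's value of 100 is spread across its neighbours, so the outputs near it come out at about 20 while the rest stay at about 10. Plain averaging like this also smears real detail, which is the limitation that learned kernels are meant to address.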

As with all machine learning, the dataset is king. Based upon DxO’s own measurements of the distortion, vignetting, chromatic aberrations, loss of sharpness, and the digital noise present in RAW images for over 60,000 combinations of lenses and equipment, the database provides the DxO DeepPRIME network with several billion samples to learn from.

Deepfakes: the dark side to AI and photography

The use of augmented reality in photography is controversial. Ever since the invention of image editors it's been possible to distort, twist and 'invent' reality, but that capability is now fuelling the growth of 'deepfakes’, which are AI-manipulated images and videos. What’s real and what’s not? It’s a growing problem, but even here AI itself comes to the rescue.

Adobe researchers, working with UC Berkeley, have trained a neural network to detect faces that have been altered with Photoshop's Face Aware Liquify feature and to suggest how to undo the changes. Another example of a deep learning algorithm that can unmask the dirty work of AI algorithms is Microsoft Video Authenticator, which analyses images and videos and is trained to detect evidence that they’ve been manipulated.

So does the increasing use of AI in photography and videography mean you should be more suspicious of photos and videos? Yes, but the flip side is that AI is making slick-looking photography accessible to all.

Are we ready for AI cameras?

The world is not necessarily ready for the full implications of AI cameras. Google's Google Clips wearable camera used AI to capture and keep only particularly memorable moments. It used an algorithm that understood the basics of photography so it didn’t waste time processing images that would definitely not make the final cut of a highlights reel. For example, it auto-deleted photographs with a finger in the frame and out-of-focus images, and favored those that complied with the general rule-of-thirds concept of how to frame a photo.
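As a rough illustration of that kind of filtering, the sketch below drops frames that fall under a sharpness threshold and then ranks the rest by how close the subject sits to a rule-of-thirds intersection. It is a hypothetical heuristic, not Google's algorithm: the sharpness scores and subject positions are assumed to come from some earlier analysis step, and the function names, threshold and scoring formula are invented for the example.

```python
import math


def thirds_score(subject_x, subject_y, width, height):
    """Return a score that reaches 1.0 when the subject sits exactly on a
    rule-of-thirds intersection and falls as it moves away from one."""
    intersections = [
        (width * fx, height * fy)
        for fx in (1 / 3, 2 / 3)
        for fy in (1 / 3, 2 / 3)
    ]
    nearest = min(
        math.hypot(subject_x - px, subject_y - py) for px, py in intersections
    )
    # Normalise by the frame diagonal so the score does not depend on resolution.
    return 1.0 - nearest / math.hypot(width, height)


def pick_keepers(frames, min_sharpness=0.5, top_n=1):
    """Discard soft frames, then keep the top_n best-composed ones."""
    sharp = [f for f in frames if f["sharpness"] >= min_sharpness]
    ranked = sorted(
        sharp,
        key=lambda f: thirds_score(*f["subject"], f["width"], f["height"]),
        reverse=True,
    )
    return ranked[:top_n]


# Hypothetical analysis results for three 600x400 frames.
frames = [
    {"name": "centred", "subject": (300, 200), "sharpness": 0.9,
     "width": 600, "height": 400},
    {"name": "on_third", "subject": (200, 133), "sharpness": 0.8,
     "width": 600, "height": 400},
    {"name": "blurry", "subject": (200, 133), "sharpness": 0.2,
     "width": 600, "height": 400},
]

best = pick_keepers(frames)
```

With this sample data the frame named `on_third` is kept: the blurry frame is discarded before ranking, and the centred frame scores lower on composition.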

Creepy and controlling? Some thought so. In any event, Google pulled the camera in 2019. The question is not whether AI is powerful enough to do the things we want, but whether we're quite ready yet to hand so much power over to a machine... or to the company that owns and operates the AI algorithms behind it.

What will AI do in the future?

AI is changing photo editing so fast, but it’s barely got started. What comes next is anyone’s guess, but there are hints. Google Research and the University of California Berkeley published a paper about their new AI technique that can remove unwanted shadows from snapshots in less than ideal lighting conditions.

Another likely advance led by AI is ‘night video’. While ‘night mode’ has been all the rage for smartphone cameras in the last few years, video at night remains disappointing. Expect that to change; Xiaomi’s Mi 11 smartphone – based on Qualcomm’s Snapdragon 888 chipset (which contains a Neural Processing Unit) – sports BlinkAI’s ‘Night Video’ mode. It works by applying machine learning algorithms to noisy video frames.

Expect composition to change, too, as a direct result of ever-increasing resolution. “The latest cameras from Sony, Canon and Nikon all shoot in 8K at 30 frames per second, so we could just film and then let the AI pick and reframe the best moments,” says Abribat. That’s something that’s already happening in the world of 360º cameras.

Resolution advances aside, the future of AI and photography is mostly going to be about software, not hardware. “As new generations of smartphones are developed, the focus on enhancing the quality of camera hardware will become a smaller part of the equation and will be replaced with advancements in AI software,” says Wang. He thinks that smartphone cameras equipped with AI could catch up to – or even overtake – existing high-end cameras.

AI may be an over-hyped term and often a shorthand for what is nothing more than the latest, greatest advanced software, but AI does promise to do something incredible for photographers: it’s going to increase productivity, both for the software and for the photographer, freeing up more of your time so you can take more, and better, photographs.


Jamie has been writing about photography, astronomy, astro-tourism and astrophotography for over 15 years, producing content for Forbes, Space.com, Live Science, Techradar, T3, BBC Wildlife, Science Focus, Sky & Telescope, BBC Sky At Night, South China Morning Post, The Guardian, The Telegraph and Travel+Leisure.

As the editor for When Is The Next Eclipse, he has a wealth of experience, expertise and enthusiasm for astrophotography, from capturing the moon and meteor showers to solar and lunar eclipses.

He also brings a great deal of knowledge on action cameras, 360 cameras, AI cameras, camera backpacks, telescopes, gimbals, tripods and all manner of photography equipment.