iPhone 15s might have stacked sensors, but what are they and why should you care?

What is a stacked sensor and will it really improve your mobile photos and videos on the new iPhone 15?

The iPhone rumors never stop and, with the announcement of the iPhone 15 range expected to take place in September, everyone is eagerly anticipating what these new models might bring to the table.

Apple's iPhones have consistently been some of the best camera phones available, pushing mobile photography and video further than ever, and no one expects the ball to be dropped now. There has been a lot of speculation around exactly what camera sensors Apple might use for its next iPhones, with rumors already surfacing that the company might introduce its first periscope camera on the iPhone 15 Pro Max model.

Thanks to respected Apple tipster Ming-Chi Kuo, we have learned that the iPhone 15 and iPhone 15 Plus might get stacked rear sensors, with the technology expanding to all iPhone 16 models next year. This all sounds great, but what exactly is a stacked sensor?

What is a stacked sensor?

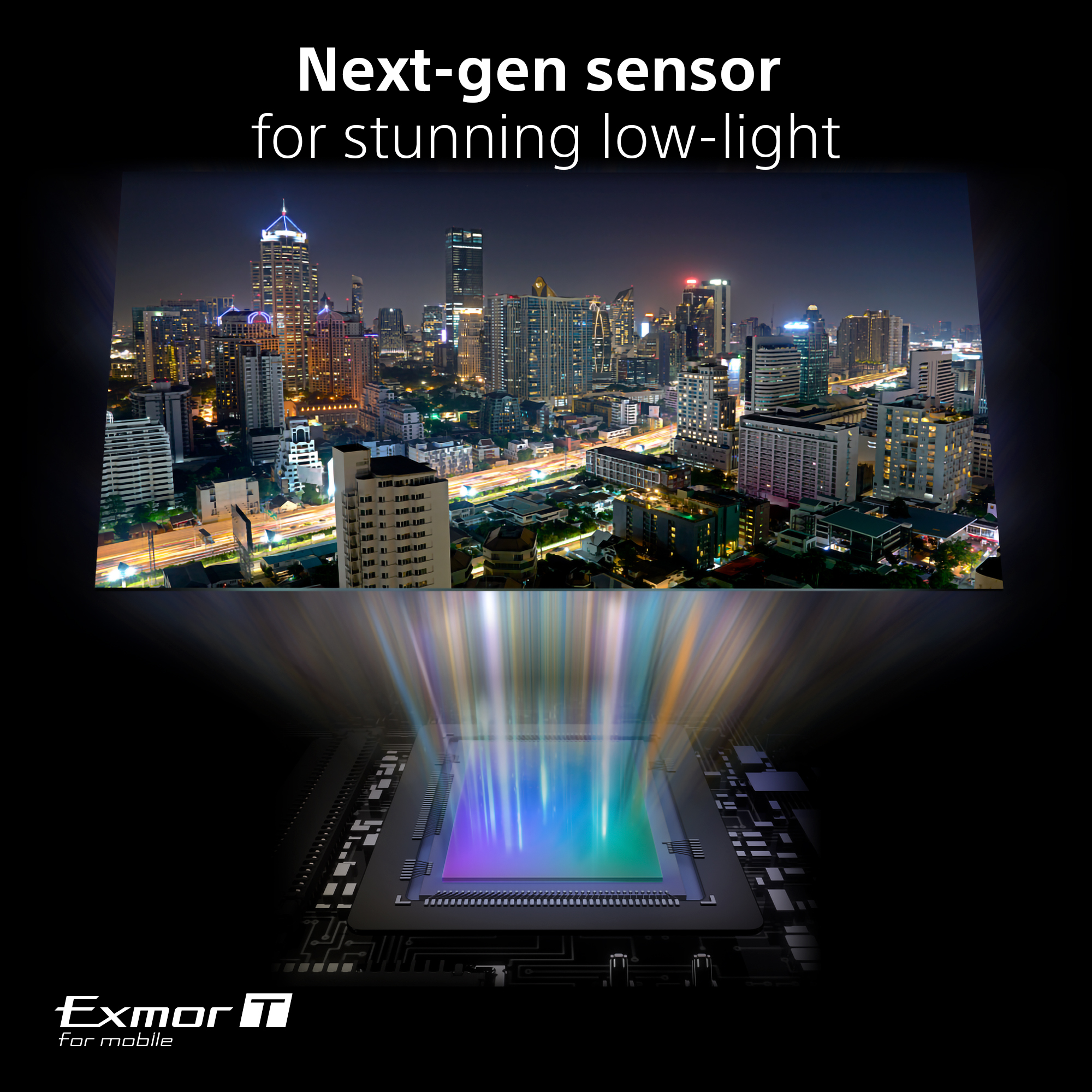

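It's a slightly complex question, but here's the simplified version. In a conventional image sensor, the light-sensitive photodiodes share space with the circuitry that reads them out. A stacked sensor splits these components into layers placed on top of one another. Sony's latest two-layer design, for example, puts the photodiodes on one layer and the pixel transistors on another. This frees up room for the sensor to capture more light, with a raft of other benefits like lower noise and better color accuracy. Stacked sensors can also read out their data faster and more efficiently, which can mean faster electronic shutter performance and less distortion of fast-moving subjects.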

All of this could bring big benefits to Apple's next generation of low-light photography and computational features like Cinematic mode's background blur, as well as better all-round image and video quality. Stacked sensors are best known from high-end mirrorless cameras like the Canon EOS R3, Sony A1 and Nikon Z8 / Z9. Phones have used simpler stacked designs for years, but the newer two-layer pixel approach has yet to make a big impact on mobile devices.

Sony recently included this kind of stacked sensor in its Sony Xperia 1 V phone. Using its own custom-designed Exmor T for mobile sensor, Sony claimed the phone performs twice as well in low light as before. Although Apple likes to design as many of its phones' components as it can, it still buys its camera sensors from Sony (as do many other manufacturers), so there is a good chance we will see a sensor similar to the stacked design in Sony's flagship phone.

Remember, though, that the sensor is just a tool, and Apple adds its own layers of computational photography and image processing, so we won't really know what the new iPhone cameras are capable of until we see them for ourselves in a few months!

Find out more about the latest Apple rumors, and you can also check out our recommendations for the best iPhone for photography, and the best phone for video recording and vlogging.

Gareth is a photographer based in London who has worked as a freelance photographer and videographer for the past several years, having had the privilege of shooting for some household names. With work focusing on fashion, portrait and lifestyle content creation, he has developed a range of skills covering everything from editorial shoots to social media videos. Outside of work, he has a personal passion for travel and nature photography, with a devotion to sustainability and environmental causes.