New Google Pixel 4 leak: motion blur, audio zoom, dynamic depth, Live HDR

The latest Google Pixel 4 leak shows that the camera is packed with new features, including a new motion blur mode


As has become tradition, Google Pixel 4 leaks continue to come thick and fast ahead of the phone's release next month. And the latest details reveal that the camera phone will pack some exciting new features such as motion blur mode, audio zoom, dynamic depth data and Live HDR.

The new modes show Google's continued innovation when it comes to phone photography, particularly in the software-driven side of imaging where the battle is increasingly being fought.


Indeed, while the iPhone 11 finally boasts more than two cameras but has little else in the way of new imaging features, the Google Pixel 4 looks like it will break new ground – both in transplanting traditional photography techniques and in introducing software-driven effects.

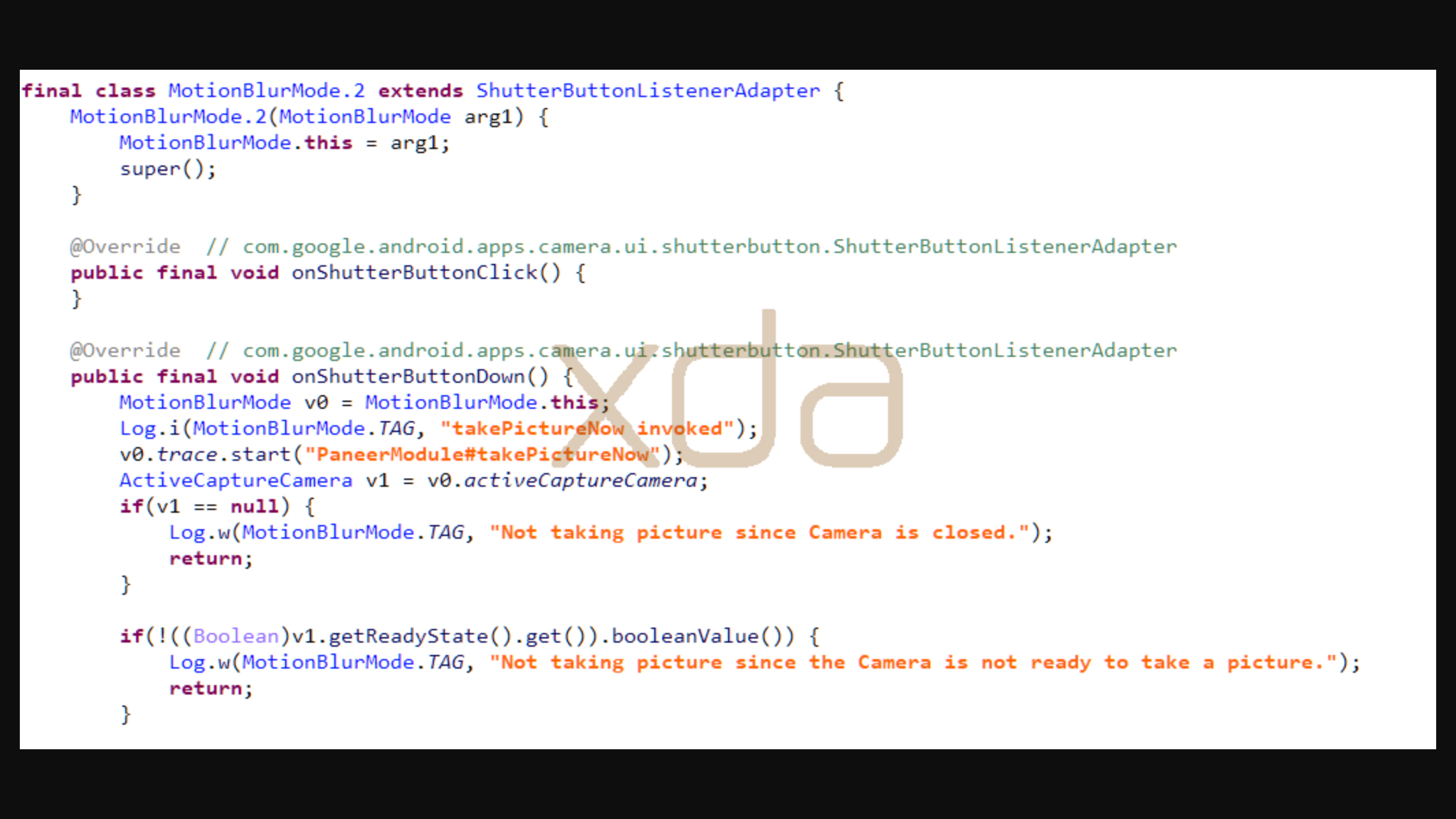

The headline feature will, according to a code dissection of the leaked Google Camera 7.0 by XDA Developers (thanks, DP Review), be Motion Mode. "It’ll supposedly let you take shots of moving subjects in the foreground while blurring the background, perfect for photos of sporting events," says the site. The effect apes panning photography with traditional cameras.

There's a little more information about the previously leaked Google Pixel 4 astrophotography mode, too. "Google will be using the GPU (the Adreno 640 in the Qualcomm Snapdragon 855) to accelerate segmentation of the sky and then optimize the image by 'finding' the stars and brightening them."
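To make the idea concrete, here is a toy sketch of "segment the sky, then find and brighten the stars". It is not Google's implementation, which is unpublished and reportedly runs on the GPU. The function name `brighten_stars`, the brightness threshold, the gain and the hand-written sky mask are all assumptions made for illustration, and the "image" is just a small grid of grayscale values from 0 to 255.

```python
def brighten_stars(image, sky_mask, threshold=200, gain=1.5):
    """Return a copy of the image with bright sky pixels boosted.

    image:    rows of grayscale values (0-255)
    sky_mask: rows of booleans, True where a pixel was segmented as sky
    threshold and gain are illustrative values, not taken from the leak.
    """
    result = []
    for row, mask_row in zip(image, sky_mask):
        new_row = []
        for value, is_sky in zip(row, mask_row):
            # Only pixels that are both in the sky and already bright
            # are treated as stars and boosted, clamped to 255.
            if is_sky and value >= threshold:
                value = min(255, round(value * gain))
            new_row.append(value)
        result.append(new_row)
    return result


# Top two rows are sky; the bottom row is foreground (say, a lit window).
image = [
    [10, 220, 12],
    [11, 13, 240],
    [90, 230, 95],
]
sky_mask = [
    [True, True, True],
    [True, True, True],
    [False, False, False],
]
brightened = brighten_stars(image, sky_mask)
```

In this sketch the bright pixel in the bottom row is left alone because the mask marks it as foreground, which is the point of segmenting the sky first.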

A Live HDR mode was also revealed, which "could be used to apply HDR in real-time to the camera viewfinder, and it may also be used to automatically retouch photos milliseconds after taking them."


It looks like the Pixel 4 will be introducing the audio zoom feature made popular by HTC (which stands to reason, as Google acquired some of HTC's technology and engineers), as well as support for a new file format called DDF: dynamic depth format.

These files record and retain the depth data for photographs, enabling apps to access and manipulate the data without affecting the original file – so, for example, depth of field could be adjusted after the fact by other software.
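As a rough illustration of why retained depth data matters, the sketch below refocuses a single row of pixels after the fact. It does not read or write the dynamic depth format itself. The function name `refocus`, the per-pixel depth list and the simple box blur are assumptions chosen to keep the example short, and real software would use a far more sophisticated blur.

```python
def refocus(pixels, depths, focus_depth, max_radius=2):
    """Return a new row blurred according to distance from the focus depth.

    pixels: grayscale values for one row of the photo
    depths: per-pixel depth values (arbitrary units), same length as pixels
    Pixels at the focus depth stay sharp; others get a box blur whose
    radius grows with their depth difference, capped at max_radius.
    """
    out = []
    n = len(pixels)
    for i in range(n):
        radius = min(max_radius, round(abs(depths[i] - focus_depth)))
        lo, hi = max(0, i - radius), min(n, i + radius + 1)
        window = pixels[lo:hi]
        out.append(sum(window) / len(window))
    return out


pixels = [100, 0, 100, 0, 100, 0]
depths = [1.0, 1.0, 1.0, 3.0, 3.0, 3.0]

# Focus on the near subject: the far half of the row is softened.
near_focus = refocus(pixels, depths, focus_depth=1.0)
# Focus on the background instead: the near half is softened.
far_focus = refocus(pixels, depths, focus_depth=3.0)
```

Both results are computed from the same untouched `pixels` and `depths`, which mirrors the idea that apps can manipulate the depth data without affecting the original file.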

It all gets us incredibly excited for the Google Pixel 4's arrival in October – and reminds us just how unambitious Apple was with the iPhone 11's photography and imaging features.


James has 25 years' experience as a journalist, serving as the head of Digital Camera World for 7 of them. He started working in the photography industry in 2014, product testing and shooting ad campaigns for Olympus, as well as clients like Aston Martin Racing, Elinchrom and L'Oréal. An Olympus / OM System, Canon and Hasselblad shooter, he has a wealth of knowledge on cameras of all makes – and he loves instant cameras, too.